How to Measure Latency Distribution using Amazon CloudWatch

Darren Lee - 09 Jan 2012

By default, web services hosted in AWS behind an Elastic Load Balancer have their latency automatically tracked in CloudWatch. This lets you easily monitor the minimum, maximum, and average latency of your services.

Unfortunately, none of these metrics is very useful. Minimum and maximum latencies really only measure outliers, and averages can easily obscure what's really going on in your services.

To get a better picture of the performance of our backend services, we have client services explicitly track the servers' latency distribution using CloudWatch custom metrics. Instead of a single metric that records how many milliseconds each request took, we count the number of requests whose latency falls within each millisecond range.

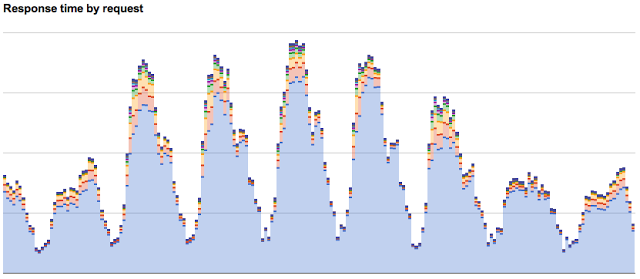

More specifically, for each service we create eleven custom metrics representing the buckets 0-10ms, 11-20ms, 21-30ms, ..., 91-100ms, and 101+ms. (We actually have another set of buckets covering the 100-1000ms range at 100ms intervals, but we've found these to be less useful.) For each request, we then increment the counter for the bucket that contains the request's latency. Periodically, an automated process pulls the aggregated counts from CloudWatch into a Google Spreadsheet and graphs the results in a stacked bar chart.
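The bucketing step can be sketched as a small function that maps a latency to the name of its bucket metric. This is a minimal sketch, assuming latencies are measured in milliseconds and rounded up to a whole millisecond; the metric names (`Latency_0-10ms` and so on) are hypothetical, not the names used in the original system.

```python
import math

# Hypothetical bucket layout mirroring the scheme described above:
# ten 10 ms buckets covering 0-100 ms plus one overflow bucket (101+ ms).
BUCKET_WIDTH_MS = 10
NUM_FINITE_BUCKETS = 10


def bucket_name(latency_ms: float) -> str:
    """Return the (hypothetical) metric name for the bucket containing latency_ms.

    Boundaries follow the post: 0-10, 11-20, ..., 91-100, 101+.
    Fractional latencies are rounded up, so 10.5 ms lands in 11-20.
    """
    ms = math.ceil(latency_ms)
    if ms < 0:
        raise ValueError("latency cannot be negative")
    if ms > BUCKET_WIDTH_MS * NUM_FINITE_BUCKETS:
        return "Latency_101+ms"
    # 0-10 -> index 0, 11-20 -> index 1, ..., 91-100 -> index 9
    index = max(0, (ms - 1) // BUCKET_WIDTH_MS)
    low = 0 if index == 0 else index * BUCKET_WIDTH_MS + 1
    high = (index + 1) * BUCKET_WIDTH_MS
    return f"Latency_{low}-{high}ms"
```

Counting requests per bucket is then just `collections.Counter(bucket_name(ms) for ms in latencies)`; each counter value is what would be incremented in the corresponding CloudWatch metric.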

A stacked bar chart of response times for one of our services. Each layer represents a different response time bucket.

This gives us a nice visual representation of both the overall traffic level and how many requests had response times below each threshold.

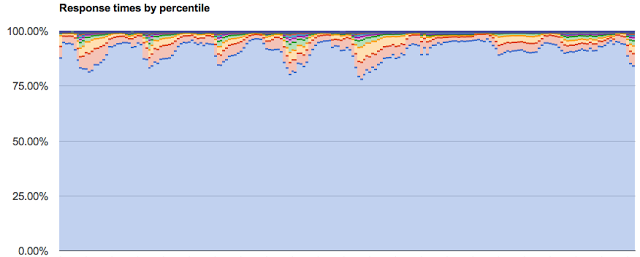

Our automated process also converts the totals into percentiles.
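The post doesn't describe the spreadsheet logic, so the following is only one plausible way to do the conversion: given per-bucket counts ordered from fastest to slowest bucket, compute each bucket's share of all requests and the running cumulative share, both in percent. The function name `to_percentages` is hypothetical.

```python
from typing import List, Sequence, Tuple


def to_percentages(counts: Sequence[int]) -> List[Tuple[float, float]]:
    """Convert per-bucket request counts (fastest bucket first) into
    (share_of_total, cumulative_share) pairs, both expressed in percent."""
    total = sum(counts)
    if total == 0:
        # No traffic in this period: every share is zero.
        return [(0.0, 0.0) for _ in counts]
    result = []
    running = 0
    for count in counts:
        running += count
        result.append((100.0 * count / total, 100.0 * running / total))
    return result
```

The first element of each pair gives the height of a layer in a 100% stacked chart; the second tells you what fraction of requests finished within that bucket's upper edge.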

A stacked bar chart of response times as percentiles.

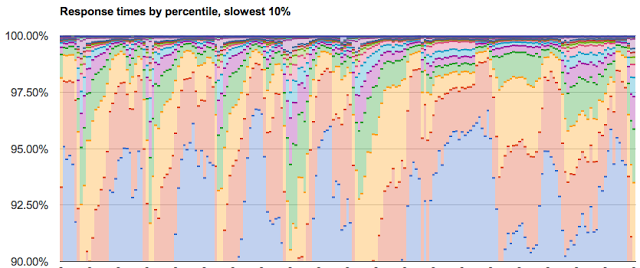

Google's chart tools support displaying only a vertical slice of the data, so we can easily show the 90th, 95th, and 97.5th percentiles of our response times. This lets us see at a glance whether we're meeting performance requirements such as "95% of all requests have response times below N ms."
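A requirement like that can also be checked directly from the bucket counts. The sketch below is an assumption, not the original tooling: it walks the buckets from fastest to slowest and returns the upper edge of the first bucket where the cumulative count reaches the target percentile. Because buckets are 10 ms wide, the result is an upper bound on the true percentile rather than an exact value. `percentile_upper_bound` and `meets_sla` are hypothetical names.

```python
from typing import Optional, Sequence


def percentile_upper_bound(upper_bounds_ms: Sequence[int],
                           counts: Sequence[int],
                           pct: float) -> Optional[int]:
    """Return the upper edge (ms) of the first bucket at which the cumulative
    share of requests reaches pct percent.

    upper_bounds_ms lists the finite bucket edges (e.g. 10, 20, ..., 100);
    counts has one extra trailing entry for the overflow (101+ ms) bucket.
    Returns None if the percentile falls in the overflow bucket.
    """
    if len(counts) != len(upper_bounds_ms) + 1:
        raise ValueError("counts must have one more entry than upper_bounds_ms")
    total = sum(counts)
    if total == 0:
        raise ValueError("no requests recorded")
    threshold = total * pct / 100.0
    running = 0
    for bound, count in zip(upper_bounds_ms, counts):
        running += count
        if running >= threshold:
            return bound
    return None  # percentile lies beyond the last finite bucket


def meets_sla(upper_bounds_ms: Sequence[int], counts: Sequence[int],
              pct: float, limit_ms: int) -> bool:
    """Conservative check: True only if at least pct% of requests are
    known to have completed at or below limit_ms."""
    bound = percentile_upper_bound(upper_bounds_ms, counts, pct)
    return bound is not None and bound <= limit_ms
```

For example, with `bounds = list(range(10, 101, 10))` and counts `[40, 30, 15, 5, 4, 2, 1, 1, 1, 0, 1]`, the 95th percentile lands in the 51-60ms bucket, so a "95% under 60 ms" requirement passes while "95% under 50 ms" does not.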

Our slowest responses.

The combination of latency bucketing, Amazon CloudWatch, and Google Spreadsheets gives us a very lightweight way of tracking our server performance. The only additional overhead on our servers is a bit of logic to aggregate counts locally and push them into CloudWatch, and the only other moving part is a simple cron task that connects the CloudWatch and Google Data APIs.
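The local aggregation can be sketched as a thread-safe counter that is periodically drained into CloudWatch. This is a minimal sketch under several assumptions: it targets the boto3 CloudWatch client's `put_metric_data(Namespace=..., MetricData=...)` call rather than whatever client the original system used, and the namespace, metric names, and `Service` dimension are hypothetical. Only non-empty buckets are sent, so a downstream consumer should treat a missing bucket as zero.

```python
import math
import threading
from collections import Counter
from typing import Dict, List


class LatencyAggregator:
    """Hypothetical in-process aggregator: counts requests per latency bucket
    (0-10, 11-20, ..., 91-100, 101+ ms) and hands the totals to CloudWatch."""

    def __init__(self, service: str, namespace: str = "Example/Latency") -> None:
        self.service = service
        self.namespace = namespace
        self._counts: Counter = Counter()
        self._lock = threading.Lock()  # record() may be called from many request threads

    @staticmethod
    def _bucket(latency_ms: float) -> str:
        ms = math.ceil(latency_ms)  # round fractional latencies up
        if ms > 100:
            return "Latency_101+ms"
        index = max(0, (ms - 1) // 10)
        low = 0 if index == 0 else index * 10 + 1
        return f"Latency_{low}-{(index + 1) * 10}ms"

    def record(self, latency_ms: float) -> None:
        """Increment the local counter for the request's bucket."""
        with self._lock:
            self._counts[self._bucket(latency_ms)] += 1

    def drain(self) -> List[Dict]:
        """Return pending counts as CloudWatch MetricData entries and reset them."""
        with self._lock:
            counts, self._counts = self._counts, Counter()
        return [
            {
                "MetricName": name,
                "Dimensions": [{"Name": "Service", "Value": self.service}],
                "Value": float(count),
                "Unit": "Count",
            }
            for name, count in sorted(counts.items())
        ]

    def flush(self, cloudwatch_client) -> int:
        """Send pending counts in one put_metric_data call; return how many metrics were sent."""
        metric_data = self.drain()
        if metric_data:
            cloudwatch_client.put_metric_data(Namespace=self.namespace,
                                              MetricData=metric_data)
        return len(metric_data)
```

In a real deployment, `flush` would be called on a timer with `boto3.client("cloudwatch")`; the cron task would then read back the per-bucket sums for each period.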
